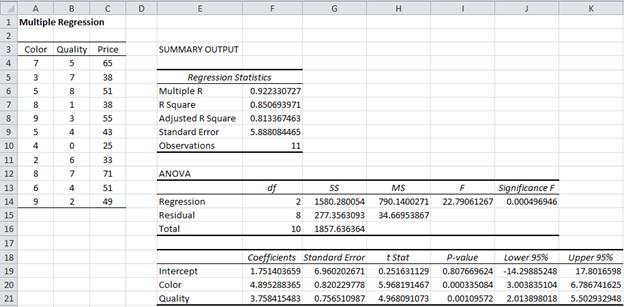

All the given variables were included in the model, which led to a good R-squared value (0.95). The mean of the residuals (error terms) is very small and can be approximated to 0. This assumption will always hold here, because ours is a linear model with a constant term β0, which takes care of it by forcing the mean of the residuals to zero.

Fitting a new model: does it make sense?

The results of the first model (multiple linear regression, where all four predictor variables are used) show that the p-values of all the predictor variables except price per week are greater than the chosen significance level of 0.05. This indicates that the relationship between the response variable and the other three independent variables (population of the city, monthly income of riders, and average parking rates per month) is not statistically significant. Let's remove those variables for better model precision.

In the results of the simple linear regression model (only one predictor variable), the R-squared value of 0.93 still explains the relationship with the response variable in spite of there being only one predictor variable. Basically, we have removed what is not needed, which typically happens with real-world data sets too.
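Two of the points above, the R-squared of a one-predictor model and the intercept β0 forcing the mean of the residuals to zero, can be sketched outside Excel. The following is a minimal Python sketch under stated assumptions: the data values are invented for illustration (so it will not reproduce the 0.93 reported above), and the helper names `simple_ols` and `r_squared` are hypothetical.

```python
from statistics import mean


def simple_ols(x, y):
    """Least-squares intercept and slope for the line y = b0 + b1 * x."""
    x_bar, y_bar = mean(x), mean(y)
    sxy = sum((a - x_bar) * (b - y_bar) for a, b in zip(x, y))
    sxx = sum((a - x_bar) ** 2 for a in x)
    slope = sxy / sxx
    intercept = y_bar - slope * x_bar
    return intercept, slope


def r_squared(y, fitted):
    """Share of the variance in y explained by the fitted values."""
    y_bar = mean(y)
    ss_res = sum((a - f) ** 2 for a, f in zip(y, fitted))
    ss_tot = sum((a - y_bar) ** 2 for a in y)
    return 1 - ss_res / ss_tot


# Invented illustrative data (NOT the article's data set):
# one predictor (price per week) and the response (weekly riders, in thousands).
price_per_week = [15, 20, 25, 30, 35, 40, 45, 50]
weekly_riders = [192, 190, 181, 175, 172, 160, 158, 150]

intercept, slope = simple_ols(price_per_week, weekly_riders)
fitted = [intercept + slope * p for p in price_per_week]
residuals = [y - f for y, f in zip(weekly_riders, fitted)]
r2 = r_squared(weekly_riders, fitted)

print(f"R-squared with one predictor: {r2:.3f}")
# Because the model includes an intercept, this is zero up to rounding error.
print(f"mean of residuals: {mean(residuals):.2e}")
```

If the intercept were dropped from the model, the mean of the residuals would generally no longer be zero, which is why the constant term matters for that assumption.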

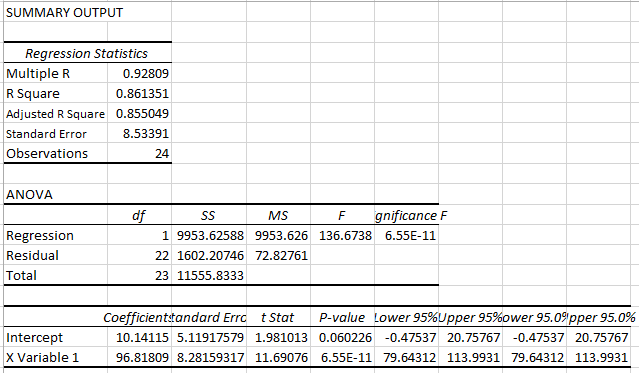

Now that you have the regression results, you can discuss with the students the key pieces of information being displayed, including the coefficients (the intercept representing the fixed costs, and X Variable 1 representing the variable costs) and how to interpret the R Square and Adjusted R Square values. The next time you teach cost behavior, consider expanding your students' Excel skills by teaching them how to perform a simple linear regression, one of the many options within the Data Analysis function.

Wendy Tietz, CPA, CGMA, Ph.D., is a professor of accounting at Kent State University in Kent, Ohio; Jennifer Cainas, CPA, DBA, is a clinical professor at the University of South Florida in Tampa; and Tracie Miller-Nobles, CPA, is an associate professor of accounting at Austin Community College in Austin, Texas. See their site for resources they have developed for teaching data analytics in introductory accounting.

1. There must be a linear relation between the independent and dependent variables. This first assumption can be validated from the scatter plots and from the regression results, which show the coefficients; the fitted values are calculated using the linear equation.

2. There should not be any outliers present. The residuals mustn't be more than 3 standard deviations away from the residual mean. Let's check that with a calculation of standardized residuals. The maximum value is 2.20 standard deviations away from the mean, so we can move ahead.

3. The residuals must have a constant variance across all the observations (homoscedasticity). The residuals vs. fitted values plot shows whether this holds. In our residual plot, the variance fans in, which is a sign of heteroscedasticity. A different interpretation is that, for fitted values below 140,000 of the number of weekly riders variable, the residuals are negative, while they are positive in between. This made it easy for me to spot a pattern, which is not the desired outcome when checking goodness of fit via residual plots. To remove pure heteroscedasticity, you can redefine the variables, apply a weighted regression technique (How in MS Excel? Coming soon), or transform the dependent variable, any of which can make the residuals homoscedastic. However, this only matters when the goal is to understand the effects of the independent variables, not when making predictions is the main goal. Note: to remove the impure form of heteroscedasticity, subject-matter expertise is needed, as the main concern is finding the key variables that are reflected in the non-constant variance.

4. Observations of the error term are uncorrelated with each other. If there is a pattern and one error term helps in predicting the next error term (generally in the order in which the observations were collected), then there is a problem. To randomize this serial correlation, independent variables to which the effect can be attributed have to be added, which requires subject-matter expertise. It is out of scope for this analysis, as the data set is constrained with respect to the number of independent variables.

5. No independent variable is a perfect linear function of other explanatory variables (no perfect multicollinearity). Just as in the case of heteroscedasticity, if predictions are the main goal, evaluating multicollinearity can be skipped, and I have skipped it. But if interpreting coefficients and p-values is the major concern, then the Variance Inflation Factor helps in detecting multicollinearity.

6. All independent variables are uncorrelated with the error term. This has to be taken for granted, as there was no prior information about the data set.
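The outlier check described above (no residual more than 3 standard deviations away from the residual mean) can also be sketched outside Excel. This is a minimal Python sketch, not the calculation behind the 2.20 figure: the data values are made up, the helper names `fit_line` and `standardized_residuals` are hypothetical, and dividing by the sample standard deviation of the residuals is an assumption about how the standardization is done.

```python
from statistics import mean, stdev


def fit_line(x, y):
    """Least-squares intercept and slope for y = b0 + b1 * x."""
    x_bar, y_bar = mean(x), mean(y)
    sxy = sum((a - x_bar) * (b - y_bar) for a, b in zip(x, y))
    sxx = sum((a - x_bar) ** 2 for a in x)
    b1 = sxy / sxx
    return y_bar - b1 * x_bar, b1


def standardized_residuals(x, y):
    """Residuals expressed in standard deviations from the residual mean."""
    b0, b1 = fit_line(x, y)
    residuals = [yi - (b0 + b1 * xi) for xi, yi in zip(x, y)]
    centre = mean(residuals)   # close to zero when an intercept is fitted
    spread = stdev(residuals)  # sample standard deviation (assumption)
    return [(r - centre) / spread for r in residuals]


# Made-up illustrative data (NOT the article's data set).
price = [15, 20, 25, 30, 35, 40, 45, 50]
riders = [192, 190, 181, 175, 172, 160, 158, 150]

z = standardized_residuals(price, riders)
worst = max(abs(v) for v in z)

print(f"largest |standardized residual| = {worst:.2f}")
if worst > 3:
    print("at least one observation is more than 3 standard deviations out")
else:
    print("no residual is more than 3 standard deviations from the mean")
```

Plotting the same residuals against the fitted values is how the constant-variance assumption would be inspected visually.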
